Data Center HVAC Systems

Data centers have HVAC cooling systems that differ from a standard air conditioning system because they cool information technology equipment (ITE) instead of people.

Data centers rely on several interconnected systems.

To understand how these systems work together, see our guide How Data Centers Work.

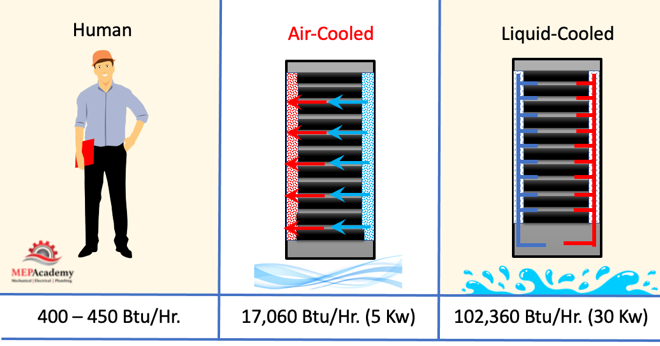

This IT equipment requires much more cooling than a room full of people. A seated person gives off about 400 to 450 Btu/hour, while a single rack of IT equipment can give off between 17,060 Btu/hour (5 kW) and 102,360 Btu/hour (30 kW). Data centers are energy intensive, and they are becoming more so.
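The kW and Btu/hour figures above are linked by a fixed conversion factor. The short Python sketch below assumes, as is usual for IT loads, that essentially all the electrical power a rack draws ends up as heat. It uses the upper 450 Btu/hour figure for a seated person. The helper names are illustrative only.

```python
# Assumption: essentially all electrical power drawn by IT equipment becomes heat.
BTU_PER_HR_PER_KW = 3412  # 1 kW ≈ 3,412 Btu/h (rounded)


def rack_heat_btuh(it_load_kw: float) -> float:
    """Heat released by a rack, in Btu/h, for a given IT load in kW."""
    return it_load_kw * BTU_PER_HR_PER_KW


def people_equivalent(it_load_kw: float, btuh_per_person: float = 450) -> float:
    """Number of seated people (at btuh_per_person each) giving off the same heat."""
    return rack_heat_btuh(it_load_kw) / btuh_per_person


for kw in (5, 30):
    print(f"{kw} kW rack: {rack_heat_btuh(kw):,.0f} Btu/h, "
          f"roughly {people_equivalent(kw):.0f} seated people")
```

At the 30 kW end, a single rack gives off as much heat as more than 200 seated people.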

We’ll explain the various HVAC systems that serve data centers, including air-cooled and liquid-cooled IT equipment. We’ll cover the three most popular data center cooling strategies: room-, row-, and rack-based cooling. You’ll also learn about proper air management in air-cooled systems.

With the explosive growth of the web, social media, cryptocurrency mining, and online commerce, data centers are in demand to hold all the data that supports these online activities.

Data centers never shut down. They run 24 hours a day, 7 days a week, 365 days a year, which makes them a huge drain on energy. All those servers and support equipment running continuously create a large heat load, and that heat must be removed for the IT equipment to function optimally.
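Because the facility never stops, annual energy use is simply the load multiplied by 8,760 hours (24 × 365). This is a minimal sketch. It assumes a constant IT load, although real loads vary, and uses the same rounded conversion of 3,412 Btu per kWh. The function names are hypothetical.

```python
HOURS_PER_YEAR = 24 * 365  # 8,760 hours: the facility never shuts down
BTU_PER_KWH = 3412         # 1 kWh ≈ 3,412 Btu (rounded)


def annual_it_energy_kwh(it_load_kw: float) -> float:
    """Energy used in a year by a constant IT load running around the clock (assumption)."""
    return it_load_kw * HOURS_PER_YEAR


def annual_heat_btu(it_load_kw: float) -> float:
    """Heat the cooling system must remove over the same year."""
    return annual_it_energy_kwh(it_load_kw) * BTU_PER_KWH


# Example: a single 5 kW rack (the low end of the range quoted earlier).
print(f"{annual_it_energy_kwh(5):,.0f} kWh/year, {annual_heat_btu(5):,.0f} Btu/year")
```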

Racking

All the IT equipment sits on shelves arranged vertically in a rack. The standard rack height is 7 feet (2.1 m). The racks are lined up together in neat rows in the data center. They house and protect data center equipment such as servers, routers, switches, hubs, and audio/visual components. This equipment can get very hot, so it needs cooling to prevent overheating and ensure proper operation. Racks in data centers are either air-cooled or liquid-cooled.

Air-Cooled Racks

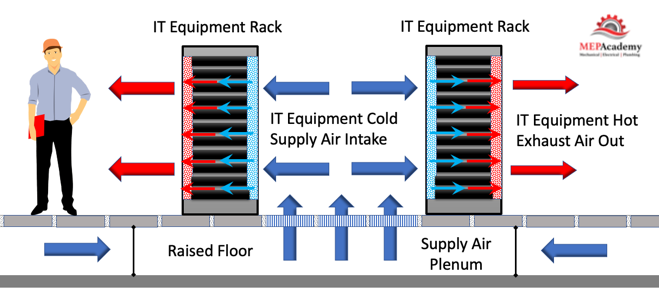

Cold air is brought through the front of the rack, across the IT equipment where it picks up heat, and then the hot air exits the back of the rack.
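The amount of cold air a rack needs depends on its heat load and on how much the air warms as it passes through. The sketch below uses the common sensible-heat equation for standard air, Q (Btu/h) = 1.08 × CFM × ΔT (°F). The 20 °F front-to-back temperature rise is a hypothetical example, and the function name is illustrative.

```python
def required_airflow_cfm(heat_btuh: float, delta_t_f: float) -> float:
    """Airflow (CFM) needed to carry away heat_btuh with a temperature rise of delta_t_f (°F).

    Standard-air sensible heat equation: Q = 1.08 * CFM * dT,
    where 1.08 = 0.075 lb/ft^3 * 60 min/h * 0.24 Btu/(lb*°F).
    """
    if delta_t_f <= 0:
        raise ValueError("temperature rise must be positive")
    return heat_btuh / (1.08 * delta_t_f)


# Example: a 5 kW rack (17,060 Btu/h) with an assumed 20 °F rise across the equipment.
print(f"About {required_airflow_cfm(17_060, 20):.0f} CFM of cold air is needed")
```

The equation also shows that halving the allowed temperature rise doubles the airflow the fans must deliver.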

To increase efficiency, blanking plates are added to direct the cold air optimally over the IT equipment positioned in the rack and to keep the warm air from mixing with the cold entering air. It’s important to cover openings within and between racks to avoid wasting energy and to direct the cold air where it is needed.

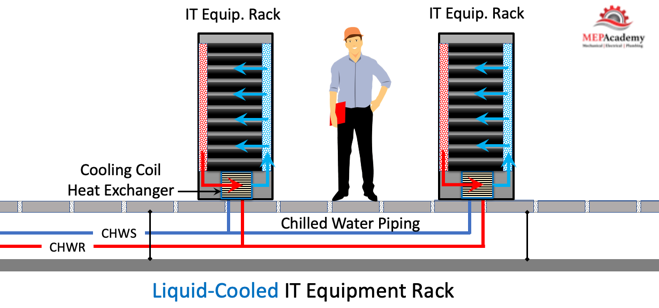

Liquid-Cooled Racks

Liquid cooling works better for racks with power densities between 5 kW and 80 kW, while traditional air-cooled racks have power densities between 1 kW and 5 kW.
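The density ranges above can be expressed as a simple rule of thumb. This is a hypothetical helper. It treats exactly 5 kW as air-cooled because the two quoted ranges meet at that value. It is not a substitute for a proper design study.

```python
def suggest_rack_cooling(density_kw: float) -> str:
    """Rule-of-thumb cooling choice based on the density ranges quoted in the text (hypothetical)."""
    if density_kw <= 0:
        raise ValueError("rack density must be positive")
    if density_kw <= 5:
        return "air-cooled"          # traditional range: roughly 1 kW to 5 kW
    if density_kw <= 80:
        return "liquid-cooled"       # liquid cooling range: 5 kW to 80 kW
    return "outside quoted range"    # beyond the figures given in the text


for kw in (3, 20, 100):
    print(kw, "kW ->", suggest_rack_cooling(kw))
```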

There are many different designs for liquid-cooled racks. Here are four types:

- Racks with integral coils

- Rear Door Heat Exchanger

- Liquid on-board cooling

- Liquid immersion cooling

Here is a liquid-cooled rack with an integral coil, or heat exchanger. Cold liquid circulates through a heat exchanger located in the rack. Small fans move air over the IT equipment to pick up its heat and carry it to the cold heat exchanger. There the heat is absorbed into the liquid, and the cooled air is sent back over the IT equipment. This is one type of liquid-cooled system, but there are many different versions, with each manufacturer trying to achieve greater efficiency with its design.

A liquid such as water can absorb about 4 times as much heat as the same mass of air for each degree of temperature rise. This makes liquid-cooled systems the ideal choice for the ever-increasing heat loads of rack equipment.
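The "4X" figure follows from the specific heats of water and air. The sketch below assumes water is the liquid and uses rounded room-temperature property values. It also makes the same comparison per unit volume, where the gap is far larger.

```python
# Approximate properties near room temperature (rounded textbook values).
CP_WATER = 4.18     # specific heat of water, kJ/(kg*K)
CP_AIR = 1.005      # specific heat of air, kJ/(kg*K)
RHO_WATER = 998.0   # density of water, kg/m^3
RHO_AIR = 1.2       # density of air, kg/m^3

# Heat absorbed per degree of temperature rise, compared on an equal-mass basis...
per_mass_ratio = CP_WATER / CP_AIR
# ...and on an equal-volume basis (volumetric heat capacity = density * specific heat).
per_volume_ratio = (RHO_WATER * CP_WATER) / (RHO_AIR * CP_AIR)

print(f"Water vs air, same mass:   {per_mass_ratio:.1f}x")
print(f"Water vs air, same volume: {per_volume_ratio:,.0f}x")
```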

We’ve looked at several rack configurations. Now let’s see how they are organized in the overall data center layout.

Data Center Layouts

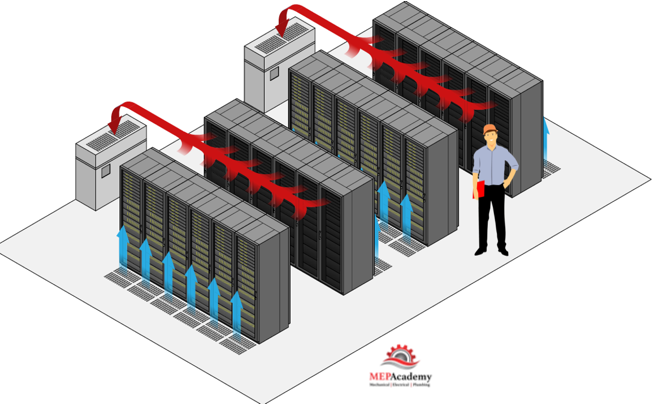

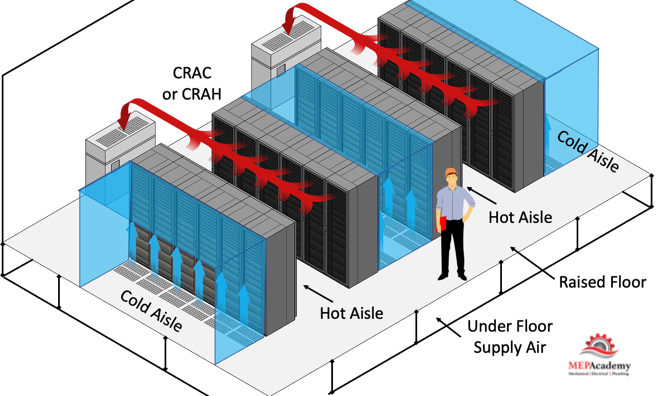

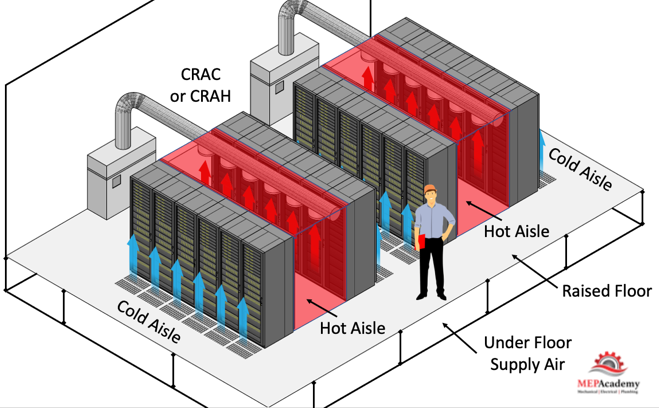

In a typical data center, you walk down aisles with rows of racks on both sides. Each aisle either supplies cold air to the IT equipment or collects the hot air it rejects, so you’re walking down either a hot aisle or a cold aisle.

The traditional method used no containment of either the hot or the cold air within these aisles. The idea was to push the supply air up through the raised floor and hope most of it made it through the racks before mixing with the hot air. As the heat generated per rack has grown, this uncontained approach has become inefficient. There are better, more efficient solutions, but first let’s explain a little about raised floors.

Raised Floors – Supply Air Plenum

Raised floors are common in larger data centers using air-cooled systems. A raised floor can sit 6” to 30” above the main floor to provide a supply air plenum. Cold air delivered to the underfloor plenum reaches the IT equipment through small holes in perforated floor tiles. Not every floor tile is perforated. Perforated tiles are placed only where cold air is needed.

The cold air flows through these perforated tiles and enters the servers, picking up their heat. The heated air rises above the servers, where the return air suction of the HVAC units pulls it back into the cooling unit. All the server racks face the same direction so that air moves through them in one direction.

There are many options for providing the cold air or liquid that is circulated to the racks. Here are a few of them.

Data Center HVAC Equipment Types

The most efficient strategy in air-cooled systems is to capture the heat before it mixes with the cold air, keeping the hot and cold air streams separate. There are three common methods of distributing air to the racks: room-, row-, or rack-based.

Data Center Room-Based Design

The most efficient solution is to implement an air management strategy.

Air Management

Proper air management in data centers keeps the cold and hot air from mixing. The cold supply air should enter the heat-generating IT equipment without mixing with the hot exhaust. The heat should then return to the cooling system without mixing with the cold air.

Separating the supply air from the return air within the space makes the system more efficient. This containment strategy is better than the traditional non-containment methods.

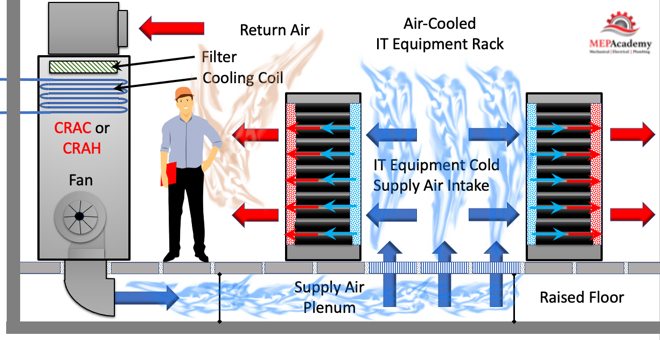

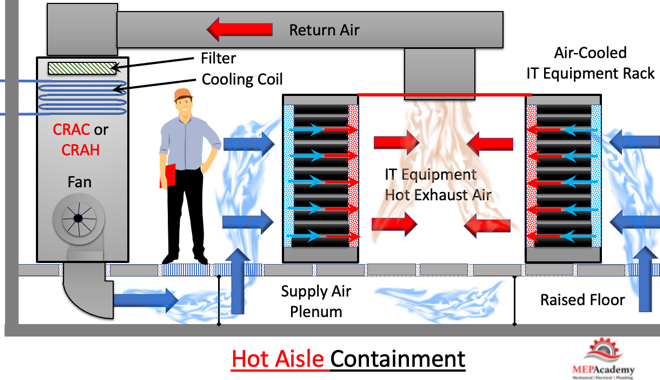

This arrangement delivers cold supply air in one aisle and removes warm return air from another. The server racks are arranged so that cool air flows from the cold aisle, through the warm IT equipment, and into the hot aisle. It then returns to the top of the CRAC unit, where the return air opening is located.

Underfloor Plenum

Cold air is pressurized in the underfloor plenum, which pushes the supply air through the perforated floor tiles in the cold aisle. The cold air enters the IT equipment racks and absorbs heat before being discharged into the hot aisle. Warm air from the hot aisle is pulled back to the CRAC or CRAH unit. This transfers the heat from the IT equipment to the DX or chilled water coil, and the heat is ultimately rejected outdoors.

Because the room is completely open, with no physical barrier between the supply (cold) aisle and the return (hot) aisles, some losses occur from mixing of the supply and return air. Hot air can migrate over the top of the rack and be recirculated into the top front of the rack. This causes short-circuiting and a loss of efficiency.
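A simple mixing balance shows how recirculation warms the rack inlet. This sketch assumes the inlet air is a mass-weighted blend of supply air and recirculated exhaust air with equal specific heats. The temperatures and the 20% recirculation fraction are hypothetical.

```python
def rack_inlet_temp(supply_f: float, exhaust_f: float, recirc_fraction: float) -> float:
    """Inlet temperature when recirc_fraction of the inlet air is recirculated hot exhaust.

    Assumes simple mass-weighted mixing of two air streams with equal specific heat.
    """
    if not 0.0 <= recirc_fraction <= 1.0:
        raise ValueError("recirculation fraction must be between 0 and 1")
    return (1.0 - recirc_fraction) * supply_f + recirc_fraction * exhaust_f


# Hypothetical: 65 °F supply, 95 °F exhaust, 20% of the inlet air short-circuited.
print(f"{rack_inlet_temp(65, 95, 0.20):.1f} °F at the rack inlet")
```

To keep the inlet at its target despite this mixing, the cooling unit would have to supply colder air. That extra cooling is the efficiency loss that containment avoids.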

This inefficiency can be resolved with a cold aisle or hot aisle containment strategy. Either method makes better use of the cold supply air entering the rack and avoids mixing. Aisle containment improves energy efficiency, provides uniform inlet temperatures to the IT equipment, and avoids hot spots.

The temperature of the air entering the IT equipment must be set correctly. Too low a supply air temperature wastes energy, while too high a supply temperature leaves the rack too hot.
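One way to act on this is to compare measured inlet temperatures against a target band. This sketch assumes ASHRAE TC 9.9's recommended inlet envelope of 18 °C to 27 °C (64.4 °F to 80.6 °F). Your equipment's allowable range may differ. The function name and messages are illustrative.

```python
# Assumption: ASHRAE TC 9.9 recommended inlet envelope of 18 °C to 27 °C.
# Check your equipment documentation; allowable ranges differ by equipment class.
RECOMMENDED_INLET_C = (18.0, 27.0)


def check_inlet_temp(temp_c: float, band: tuple[float, float] = RECOMMENDED_INLET_C) -> str:
    """Classify a measured rack inlet temperature against a recommended band."""
    low, high = band
    if temp_c < low:
        return "too cold: cooling energy is being wasted"
    if temp_c > high:
        return "too hot: equipment may overheat"
    return "within recommended band"


for reading in (15.0, 22.0, 30.0):
    print(reading, "->", check_inlet_temp(reading))
```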

When designing high-density data centers, it’s best to use the hot aisle containment (HAC) strategy, because insufficient cold air reaches the racks in a cold aisle containment (CAC) arrangement.¹

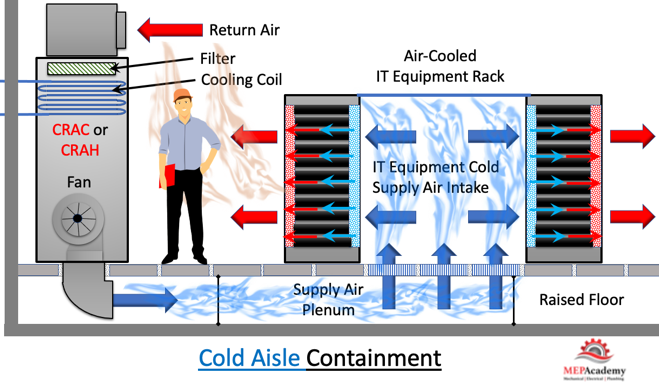

Cold Aisle Containment (CAC)

Confining the cold air to the front of the server racks, with no opportunity to mix with the return air, increases efficiency and improves delivery of cold supply air to the racks. A containment barrier on the cold aisle directs the cold supply air to the front of the servers, where it is most useful.

Cold air has nowhere to go except through the racks, where it picks up heat from the IT equipment. It then enters the hot aisle, rises, and is pulled back to the HVAC equipment. The hot air is not contained within the space.

Using the cold aisle containment method, the cold air is contained within the cold aisle, while the warm return air is allowed to circulate throughout the whole data center. The two air streams are separated by some form of containment enclosure on the supply side.

Hot Aisle Containment (HAC)

With this strategy, the hot air exhausted from the racks is confined to the hot aisle. It is pulled into ductwork or a plenum and sent back to the HVAC equipment without mixing with the cold supply air. This works with or without a raised floor, because the supply air is not contained and can fill the room.

The hot aisle is enclosed to keep the hot air from the IT equipment contained, while the cold air circulates throughout the data center. This is the direct opposite of the cold aisle system.

Computer Room Units

There are several different styles and configurations of computer room HVAC equipment. Some units mount on the ceiling, others sit on a raised floor, and others sit in-row between the racks and do not require a raised floor. Traditionally, the two most common HVAC systems for medium to large data centers were the CRAC (computer room air conditioner) and the CRAH (computer room air handler). Each is essentially a big box containing fans, cooling coils, filters, and options such as humidifiers. The two units look similar. Only the way they cool the air is different.

It’s common to find a raised floor system in a data center, where the cold air is supplied to a plenum under the IT equipment. The HVAC units are strategically located throughout the data center floor area and supply cold air in a downflow pattern into the open plenum space below the floor.

CRAC vs CRAH

The difference between the two is that the CRAC units are DX cooled and have a DX condenser outdoors to support the indoor unit. The CRAH unit is provided with chilled water and has a chiller as the source.

Traditional room-based cooling systems are reaching the limits of their capabilities in some data centers. Higher-density blade servers pack a lot of power into a small space, which means more heat. Room-based systems are designed for lower-density racks and simply can’t keep up with this heat load, which can create hot spots.

To address this problem, cooling can be brought closer to the source of the heat: the rack. These systems are often called close-coupled cooling systems. They can be used instead of, or in addition to, standard room-based cooling systems, and they include in-row and in-rack systems.

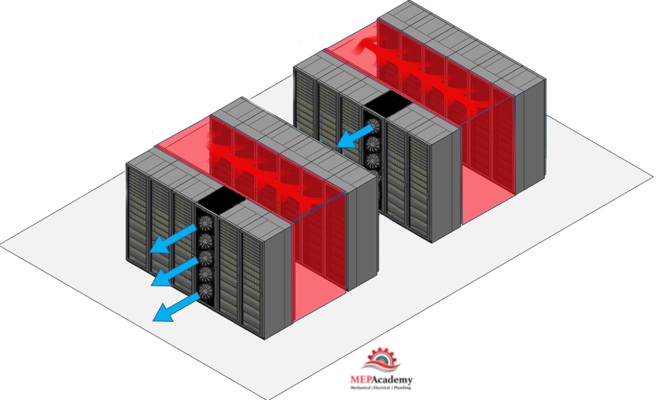

In-Row Cooling Units

In-row cooling units sit between the IT equipment racks. They draw hot air from the hot aisle, cool it, and blow it into the cold aisle, where it is drawn into the IT equipment racks to cool the equipment. Each in-row CRAC unit is dedicated to one row of racks. Despite the name, overhead and underfloor versions are also available.

Being close to the racks shortens the air path, which reduces fan energy. In-row CRAC units also allow different cooling capacities per row to handle the varying load profiles of the server racks. One row may generate more heat than another because of the type of IT equipment it holds.
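Fan power scales with both airflow and the static pressure the fan must overcome. A shorter air path typically means less pressure to overcome. This sketch uses the basic relation P = Q × ΔP / η, where P is power, Q is airflow, ΔP is static pressure, and η is efficiency. The airflow, pressures, and efficiency are hypothetical values chosen only to show the proportionality.

```python
def fan_power_w(airflow_m3_s: float, static_pressure_pa: float, efficiency: float) -> float:
    """Fan input power in watts: air power (flow x pressure) divided by overall efficiency."""
    if not 0.0 < efficiency <= 1.0:
        raise ValueError("efficiency must be between 0 and 1")
    return airflow_m3_s * static_pressure_pa / efficiency


# Hypothetical numbers: same airflow, but a shorter air path with half the static pressure.
AIRFLOW = 2.0     # m^3/s
EFFICIENCY = 0.6  # combined fan and motor efficiency (assumed)
long_path = fan_power_w(AIRFLOW, 500.0, EFFICIENCY)
short_path = fan_power_w(AIRFLOW, 250.0, EFFICIENCY)
print(f"Long air path: {long_path:.0f} W, short air path: {short_path:.0f} W")
```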

A raised floor is not required for this design, which saves money and increases the floor’s load-bearing capacity.

The hot aisle must be designed with a roof and doors on the sides to allow access. The roof and sides keep the hot air contained so it doesn’t mix with the cold air. With In-row units the source of cooling is closer to the heat load, minimizing the mixing of hot and cold air streams.

In-row cooling units can be served with chilled water, or they can be self-contained mini air conditioners that only need to be plugged into a 208/240 V outlet. For higher-density data centers using in-row units, chilled water is the better solution.

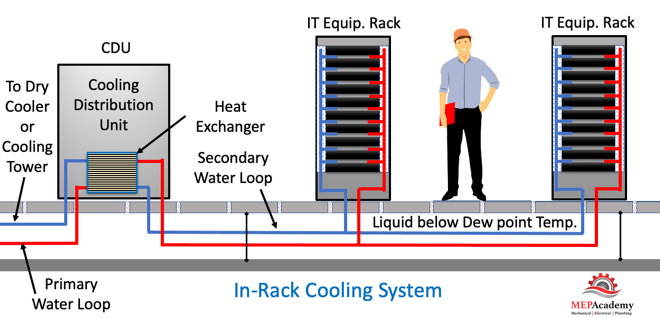

Rack Cooling

There are various rack cooling designs, including units mounted directly on the rack and units housed within the rack itself. Each of these systems is dedicated to one server rack.

One option is self-contained racks with their own air conditioner, but these are limited in size. For higher densities, chilled water is fed to the rack cooling system. The rack can have a heat exchanger mounted on the back that absorbs the heat ejected from the IT equipment. This method can achieve up to 60 kW per rack.

For more information, see the government’s ENERGY STAR article on in-row and in-rack cooling.

Energy Star Article: Install In-Row or In-Rack Cooling

Some data centers use a combination of the three systems because load densities vary across the facility.

Rack-based systems cost more to purchase, especially at lower power densities, but their annual electricity costs are lower.
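Whether the higher purchase price pays off comes down to a simple payback calculation. This sketch ignores discounting, maintenance, and changing electricity prices. The cost difference, energy savings, and electricity rate are hypothetical inputs, not figures from the text.

```python
def simple_payback_years(extra_cost: float, kwh_saved_per_year: float, price_per_kwh: float) -> float:
    """Years for annual electricity savings to repay a higher purchase price (no discounting)."""
    annual_savings = kwh_saved_per_year * price_per_kwh
    if annual_savings <= 0:
        raise ValueError("annual savings must be positive")
    return extra_cost / annual_savings


# Hypothetical inputs: $40,000 extra up front, 50,000 kWh/year saved at $0.12/kWh.
print(f"Simple payback: {simple_payback_years(40_000, 50_000, 0.12):.1f} years")
```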

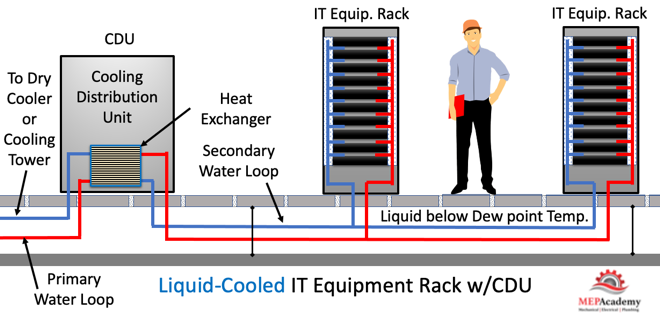

CDU – Cooling Distribution Units

Cooling distribution units provide separation between the IT equipment in the racks and the outdoor heat rejection equipment like a cooling tower or dry cooler. The heat exchanger in the CDU keeps the two water systems separated so they never mix, allowing the liquid circulating in the racks to be unaffected by the water circulated outdoors. Water from the tower is circulated to the primary side of the heat exchanger in the CDU where it absorbs the heat from the secondary water circulating through the racks.

Inside the CDU are redundant pumps that circulate secondary water to various racks.
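The pumps must move enough secondary water to carry the rack heat to the CDU heat exchanger. The energy balance is Q = ṁ × cp × ΔT, where Q is heat load, ṁ is mass flow, cp is specific heat, and ΔT is temperature rise. This sketch assumes plain water with rounded properties. The 30 kW load and 10 K supply-to-return rise are hypothetical.

```python
CP_WATER = 4.18      # kJ/(kg*K), approximate specific heat of water
RHO_WATER = 998.0    # kg/m^3, approximate density of water


def secondary_flow_lpm(heat_kw: float, delta_t_k: float) -> float:
    """Water flow (litres per minute) needed to absorb heat_kw with a temperature rise of delta_t_k."""
    if delta_t_k <= 0:
        raise ValueError("temperature rise must be positive")
    mass_flow_kg_s = heat_kw / (CP_WATER * delta_t_k)   # Q = m * cp * dT, solved for m
    return mass_flow_kg_s / RHO_WATER * 1000 * 60       # kg/s -> m^3/s -> L/s -> L/min


# Hypothetical: one 30 kW rack with a 10 K rise between supply and return water.
print(f"{secondary_flow_lpm(30, 10):.1f} L/min")
```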

The CDU supplies water to the IT rack equipment at a temperature above the dew point to avoid condensation issues.
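The dew point can be estimated from room temperature and relative humidity. This sketch uses the Magnus approximation with a commonly used coefficient set (17.62 and 243.12 °C), which is reasonable at normal room conditions. The 2 K safety margin and the helper names are hypothetical.

```python
import math

# Magnus approximation coefficients (a commonly used set for water vapour over liquid water).
MAGNUS_B = 17.62
MAGNUS_C = 243.12  # °C


def dew_point_c(temp_c: float, rh_percent: float) -> float:
    """Approximate dew point (°C) from dry-bulb temperature (°C) and relative humidity (%)."""
    if not 0 < rh_percent <= 100:
        raise ValueError("relative humidity must be in (0, 100]")
    gamma = math.log(rh_percent / 100) + MAGNUS_B * temp_c / (MAGNUS_C + temp_c)
    return MAGNUS_C * gamma / (MAGNUS_B - gamma)


def supply_above_dew_point(supply_c: float, room_c: float, room_rh: float, margin_k: float = 2.0) -> bool:
    """True if the CDU supply water is at least margin_k above the room dew point (margin is hypothetical)."""
    return supply_c >= dew_point_c(room_c, room_rh) + margin_k


# Example room: 24 °C at 50% relative humidity.
print(f"Dew point: {dew_point_c(24, 50):.1f} °C")
print("18 °C supply OK?", supply_above_dew_point(18, 24, 50))
```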

CDUs can be very energy efficient because they avoid refrigeration equipment such as chillers and compressor-driven DX coils. Instead, they reject heat through a dry cooler or cooling tower. With some manufacturers, you can achieve 5 kW (17,060 Btu/hr) to 30 kW (102,360 Btu/hr) of heat removal per rack.

These systems are usually cost-effective compared to most other systems. If a leak occurs, the exposure is limited because their secondary loops hold a very small volume of water compared to the chilled water systems used with other rack cooling strategies.

Data Center Engineering Series

This article is the hub of our Data Center Educational Series, where we break down each major system in detail.

Currently Published

• How Data Centers Actually Work

An overview of how modern data centers operate, explaining the critical electrical, mechanical, and IT infrastructure required to keep servers running 24/7.

• Data Center Power Flow: From Utility Grid to Server Rack

Learn how electrical power travels from the utility grid through switchgear, UPS systems, generators, and distribution equipment before reaching server racks.

• Data Center Cooling Methods Explained

Learn how CRAC units, chilled water systems, and airflow management remove heat from server environments.

• Data Center Redundancy Explained (N, N+1, and 2N Systems)

Understand how redundancy strategies like N, N+1, and 2N designs protect data centers from outages and ensure continuous operation.

• Data Center Refrigerant Economizer

Discover how refrigerant economizer systems improve cooling efficiency by using outdoor conditions to reduce compressor operation and lower energy consumption.

• Data Center HVAC Systems

Learn how air-cooled and liquid-cooled racks, aisle containment, CRAC and CRAH units, in-row and in-rack cooling, and cooling distribution units remove heat from IT equipment.